If you run a startup, this is the kind of week that quietly rearranges your strategy deck. Not because some shiny benchmark nudged up 2 points. Not because another lab dropped a press release with cinematic screenshots.

But because multiple open models just hit enough capability, across enough device sizes, to make one dangerous idea suddenly practical: you may not need to keep paying the closed-model tax forever.

🎯 The headline everyone wants to believe

30 people outperformed OpenAI for $20M. It is a magnetic sentence. It compresses rebellion, efficiency, and startup vengeance into six words. It also deserves a proper fact-check before you build a worldview around it.

$20M total effort: Arcee’s official Trinity Large post says the full effort including compute, salaries, data, storage, and ops was completed for $20 million.

33-day pretraining run: Arcee’s official post says the full Trinity Large pretraining run finished in 33 days on 2,048 Nvidia B300 GPUs.

Translation for founders: the slogan is slightly overcooked. The underlying shift is not. And the underlying shift is the part that can make you money.

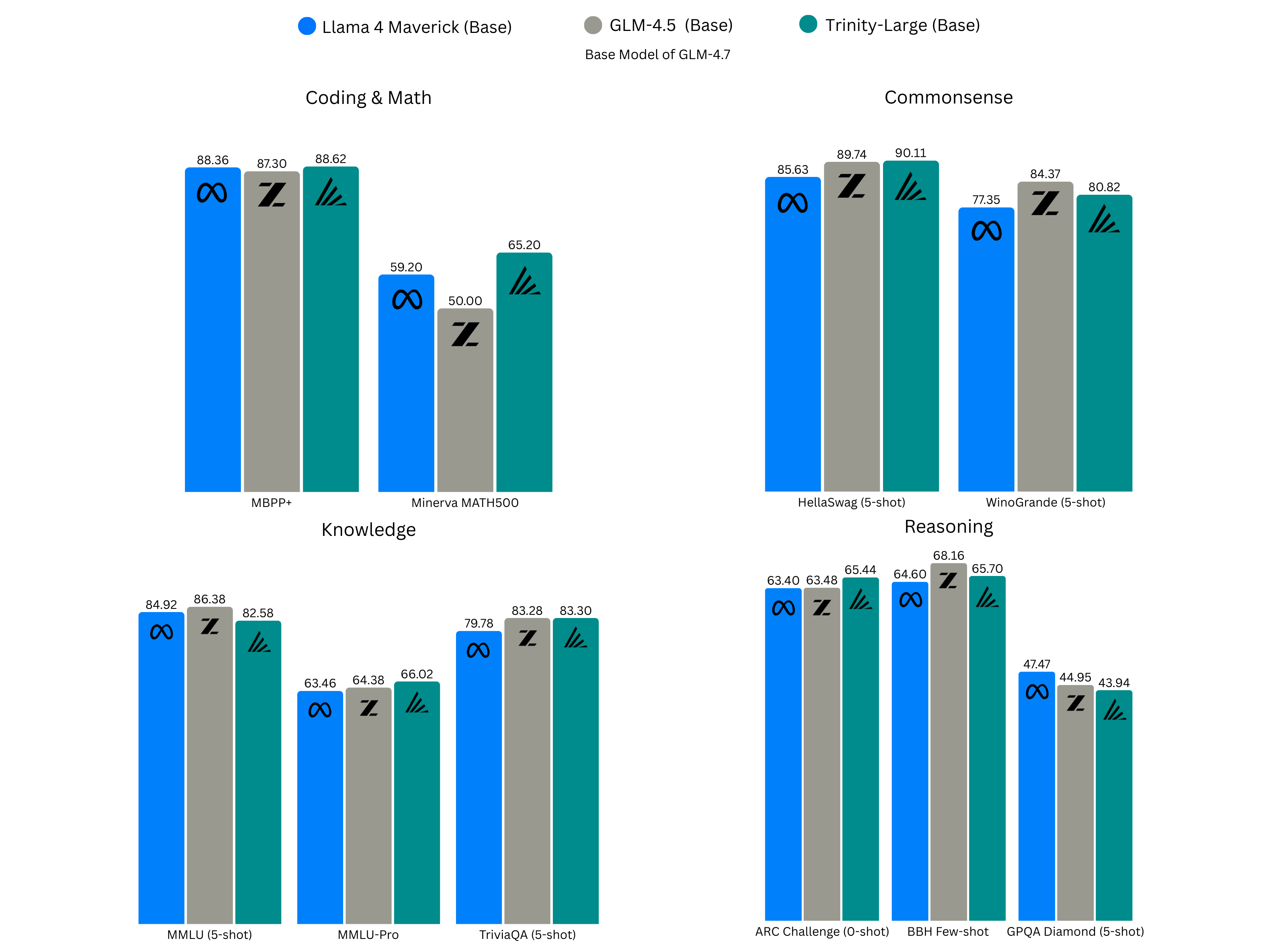

Benchmark graphic shown in Arcee’s official Trinity Large announcement

The deeper story: four open models just attacked the stack from every angle

The Neuron’s bigger thesis is that the hierarchy cracked across every scale: tiny on-device models, compressed local models, agentic desktop models, and frontier-class reasoning models all advanced at once.

That matters because it means founders now have more than a single open-model option. They have an architecture menu.

Gemma 4: open power for the product layer

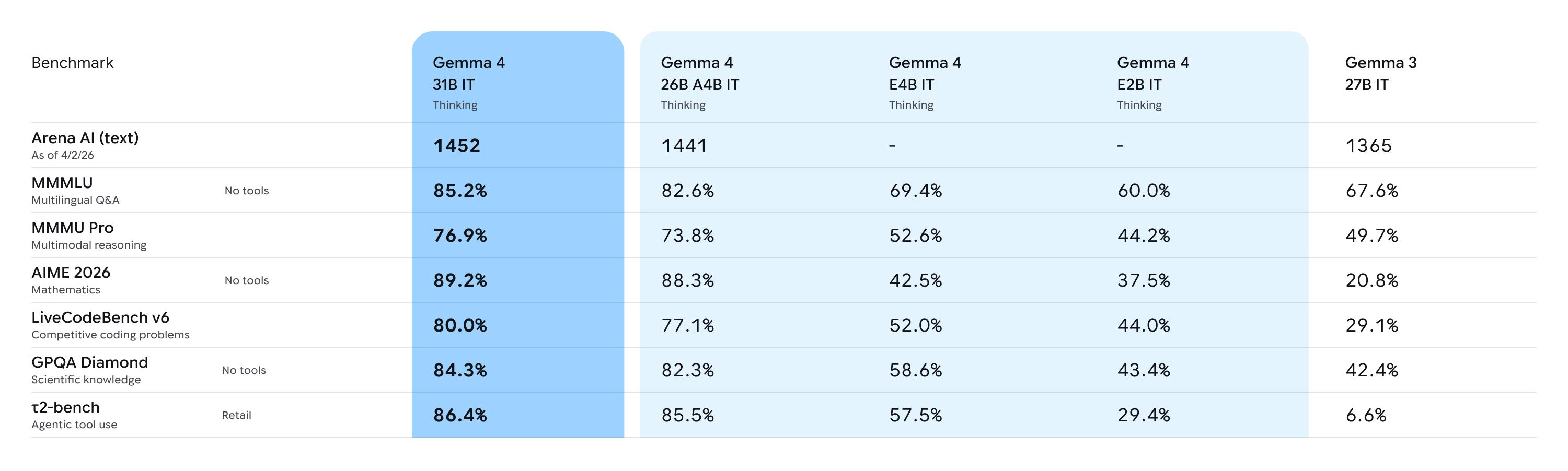

Google says Gemma 4 ships in four sizes: E2B, E4B, 26B MoE, and 31B Dense. The edge variants offer 128K context; the larger variants go up to 256K. Google also says the 31B version ranks #3 among open models on the Arena AI text leaderboard, and that Gemma 4 is released under Apache 2.0.

Founder implication: you can aim higher on private copilots, embedded agents, and on-device product experiences without automatically defaulting to a closed API.

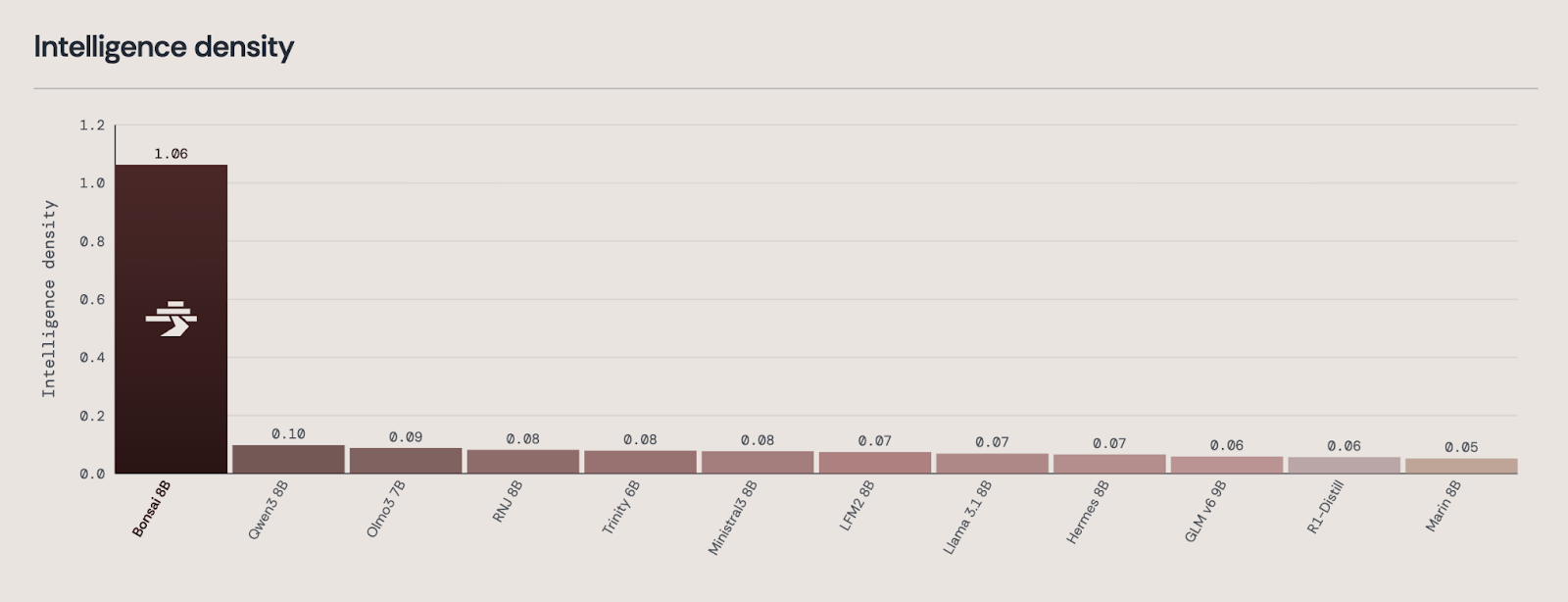

1-bit Bonsai 8B: compression becomes strategy

PrismML says Bonsai 8B spans 8.2B parameters but weighs just 1.15 GB, roughly 14x smaller than comparable 16-bit 8B models. PrismML also reports roughly 44 tokens/sec on an iPhone 17 Pro Max, 131 tokens/sec on an M4 Pro Mac, and 368 tokens/sec on an RTX 4090.
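The "14x smaller" claim is easy to sanity-check yourself. Here is a minimal sketch using only the figures PrismML reports above, and assuming 1 GB means 10^9 bytes and that a 16-bit model stores exactly 2 bytes per parameter (real checkpoints carry some extra overhead):

```python
# Figures quoted from PrismML in the text above.
PARAMS = 8.2e9          # parameter count
BONSAI_BYTES = 1.15e9   # reported model size, assuming 1 GB = 10**9 bytes

# A 16-bit model stores 2 bytes per parameter.
fp16_bytes = PARAMS * 2
compression_ratio = fp16_bytes / BONSAI_BYTES

# Effective storage per parameter in the compressed model.
bits_per_param = BONSAI_BYTES * 8 / PARAMS

print(f"16-bit size: {fp16_bytes / 1e9:.1f} GB")
print(f"compression: {compression_ratio:.1f}x")
print(f"effective bits per parameter: {bits_per_param:.2f}")
```

Under those assumptions the ratio lands a little above 14x, and the effective storage works out to slightly more than one bit per parameter, which is consistent with the "1-bit" label plus a small amount of overhead.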

Founder implication: distribution just got weird. If intelligence fits on the edge, your product can feel instant, private, and dramatically cheaper to run.

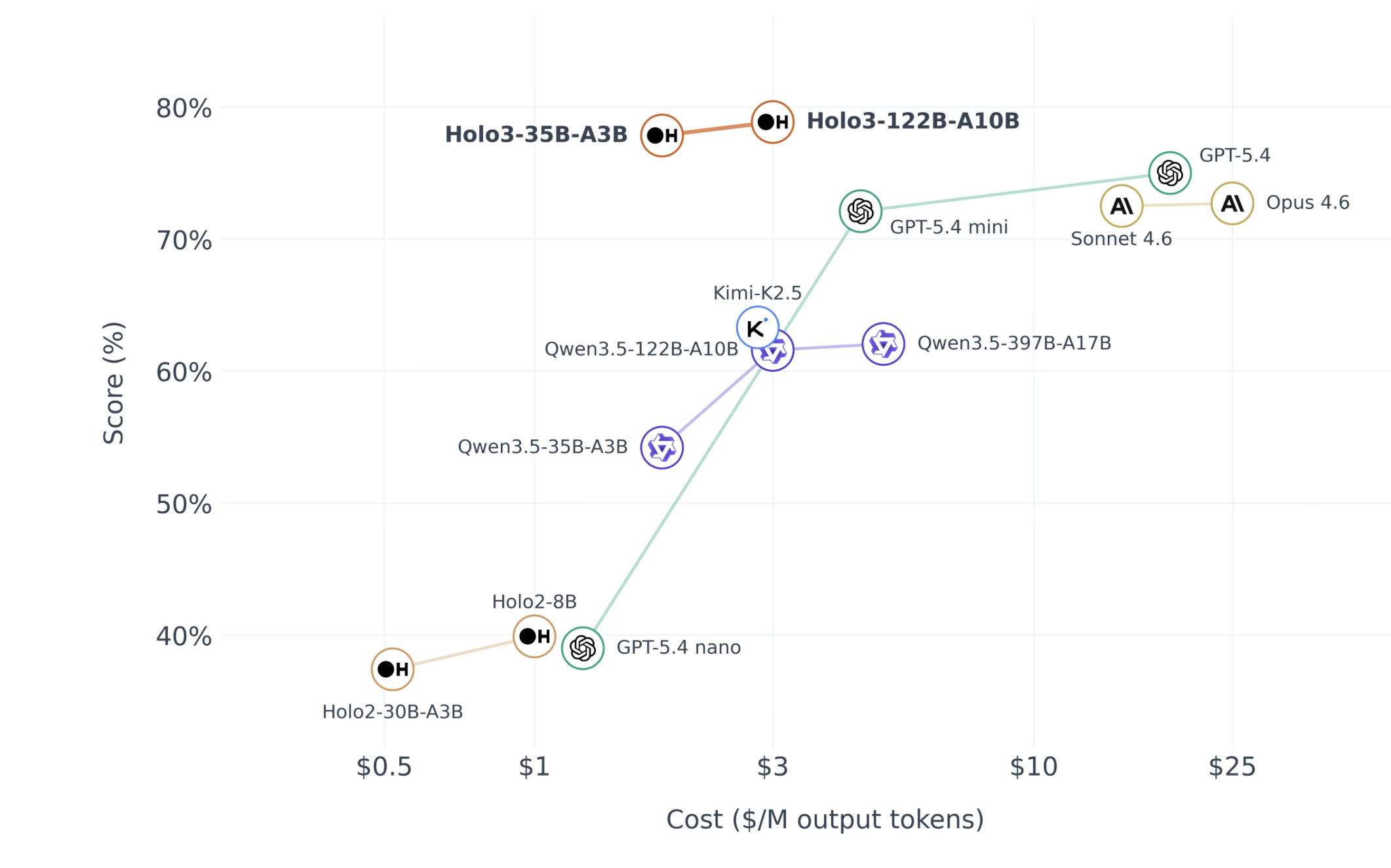

Holo3: agents get enterprise teeth

H Company says Holo3-122B-A10B scored 78.85% on OSWorld-Verified. It also says the model uses 122B total parameters with only 10B active, and that the company’s internal corporate benchmark contains 486 multi-step tasks.

Founder implication: if your startup automates desktop workflows, RPA, or ugly multi-app business processes, open agents are moving from toy to serious infrastructure.

Trinity-Large-Thinking: frontier ambition gets cheaper

Arcee says Trinity-Large-Thinking is released under Apache 2.0, ranked #2 on PinchBench, and is priced at $0.90 per million output tokens on its API. Arcee also says the earlier Preview model served 3.37 trillion tokens on OpenRouter in its first 2 months.

Founder implication: if quality is approaching frontier territory while economics collapse, the winners won’t be the people with the biggest burn. They’ll be the ones with the clearest wedge.

Gemma 4 model family chart from Google’s official announcement.

Snippet worth stealing for your own strategy memo

Open models are no longer one thing. They are becoming a full-stack design choice: tiny where latency matters, compressed where distribution matters, agentic where workflows matter, and frontier-scale where reasoning depth matters.

Now let’s zoom in on the story that should wake up every small startup owner

Forget the meme. The real Arcee story is this: a comparatively small company took a shot at pretraining a 400B-parameter sparse MoE model, finished the pretraining run in 33 days, trained on 17T tokens, used 2,048 Nvidia B300 GPUs, and says the full effort cost $20M.

For a founder brain, that is not just a technical anecdote. It is a pricing signal about where frontier capability is going.
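To see what that pricing signal looks like in raw units, here is a back-of-envelope sketch built only from Arcee's quoted numbers. It assumes all 2,048 GPUs ran for the full 33 days, which the source does not spell out, and it treats the $20M as a ceiling because that figure also covers salaries, data, storage, and ops:

```python
# Figures quoted from Arcee in the text above.
GPUS = 2048
DAYS = 33
TOKENS = 17e12
TOTAL_COST_USD = 20e6   # full effort, not compute alone

# Assumption: every GPU was busy for the entire run.
gpu_hours = GPUS * DAYS * 24
tokens_per_second = TOKENS / (DAYS * 24 * 3600)
tokens_per_gpu_second = tokens_per_second / GPUS

# Upper bound only: the $20M also covers salaries, data, storage, and ops.
max_usd_per_gpu_hour = TOTAL_COST_USD / gpu_hours

print(f"GPU-hours: {gpu_hours:,}")
print(f"cluster throughput: {tokens_per_second / 1e6:.2f}M tokens/sec")
print(f"per-GPU throughput: {tokens_per_gpu_second:,.0f} tokens/sec")
print(f"all-in cost ceiling: ${max_usd_per_gpu_hour:.2f} per GPU-hour")
```

The last number is not a compute price. It is what the whole project cost per GPU-hour of pretraining, which is the figure worth comparing against your own fully loaded costs.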

That doesn’t mean every startup can suddenly train a frontier model in-house. Obviously not. But it does mean the old psychological barrier “only the mega-labs can even play this game” is taking damage.

When that barrier breaks, market repricing usually follows. Infrastructure repricing. Talent repricing. Product expectation repricing. Even investor narrative repricing.

Benchmark image shown in Arcee’s Trinity-Large-Thinking release.

Why this should light a fire under founders

1) Your gross margin story just changed

For many startups, “AI innovation” actually meant reselling someone else’s API with prettier UX. That worked while customers were amazed. It gets uglier when usage scales.

If open models keep improving, your path to margin expansion becomes much more real: self-host the right layer, fine-tune for your wedge, keep premium closed calls only where absolutely necessary.

2) Ownership becomes a product feature

Apache 2.0 matters because it reduces legal friction. Google explicitly says Gemma 4 is under Apache 2.0. Arcee says Trinity-Large-Thinking is under Apache 2.0.

Holo3’s open-weight 35B model is available under Apache 2.0. That means startups can build products around them with fewer licensing headaches.

3) Small teams can now look bigger than they are

When one compressed model runs on-device, one reasoning model handles premium workflows, and one agent model automates ugly back-office actions, a lean startup can ship the illusion of a giant company.

That illusion is where category theft begins.

4) Defensibility shifts to workflow depth

The more model access commoditizes, the more the moat moves into proprietary data loops, vertical distribution, human-in-the-loop system design, and the speed at which you can operationalize AI inside one painful use case.

Exclusive angle

The next breakout AI startup probably won’t win because it found a magically better model. It will win because it used a now-cheaper model stack to dominate one niche before everyone else realized the stack had become affordable.

🚀 What I would not say, even though it sounds amazing on X

I would not tell your readers that a 30-person team definitively beat OpenAI. That statement is too loose. It overstates what the primary sources currently support, and sharp readers will catch it.

The stronger line is this: a relatively small startup showed that frontier-class open-model ambition is no longer reserved for the richest labs on earth, and that matters more than one viral benchmark dunk.

The founder playbook hiding inside this moment

Step 1 — Stop asking “Which model is best?”

Ask: which parts of my product need edge speed, which parts need cheap volume, which parts need deep reasoning, and which parts need desktop action?
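That question can be written down as a tiny routing table. The sketch below is illustrative only: the `Workload` fields, the tier names, the example workloads, and the order of the rules are all hypothetical choices, not anything the model vendors prescribe.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    name: str
    needs_on_device: bool = False       # latency or privacy demands local inference
    needs_desktop_action: bool = False  # must click through real applications
    needs_deep_reasoning: bool = False  # multi-step, high-stakes outputs


def pick_tier(w: Workload) -> str:
    """Return a hypothetical model tier; the rule order is an assumption."""
    if w.needs_on_device:
        return "edge"
    if w.needs_desktop_action:
        return "agent"
    if w.needs_deep_reasoning:
        return "reasoning"
    return "cheap-volume"


# Made-up example workloads for illustration.
workloads = [
    Workload("autocomplete in the mobile app", needs_on_device=True),
    Workload("invoice entry across two legacy apps", needs_desktop_action=True),
    Workload("contract risk review", needs_deep_reasoning=True),
    Workload("ticket tagging"),
]

routing = {w.name: pick_tier(w) for w in workloads}
for name, tier in routing.items():
    print(f"{name} -> {tier}")
```

The point of the exercise is that anything falling through to the default tier is a candidate for the cheapest model you can run.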

Step 2 — Use closed models surgically, not emotionally.

Keep the premium API only where it truly changes output quality or conversion. Everywhere else, the margin story is too important to ignore.
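A rough way to put numbers on that margin story is a blended price per million output tokens. In this sketch the open price is Arcee's quoted $0.90 API rate from earlier in the piece, while the premium price is a placeholder you should replace with your own contract rate. It also ignores input tokens and hosting overhead.

```python
def blended_cost_per_million(premium_share: float,
                             premium_price: float,
                             open_price: float) -> float:
    """Average output price per million tokens when a share of traffic stays on a premium API."""
    if not 0.0 <= premium_share <= 1.0:
        raise ValueError("premium_share must be between 0 and 1")
    return premium_share * premium_price + (1.0 - premium_share) * open_price


OPEN_PRICE = 0.90            # Arcee's quoted API price per million output tokens
PLACEHOLDER_PREMIUM = 10.00  # hypothetical closed-API price, not a real quote

for share in (1.0, 0.5, 0.1):
    cost = blended_cost_per_million(share, PLACEHOLDER_PREMIUM, OPEN_PRICE)
    print(f"{share:.0%} premium traffic: ${cost:.2f} per million output tokens")
```

Plug in your real prices and the share of requests that genuinely need the premium model, and you have the first line of your margin expansion slide.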

Step 3 — Build a wedge around ownership.

Privacy, latency, controllability, auditability, and custom post-training are becoming real go-to-market advantages — especially for B2B founders.

Step 4 — Treat benchmarks as clues, not religion.

A benchmark can signal direction. It cannot replace product truth. Your real test is whether the model reduces friction in one expensive workflow customers already hate.

Step 5 — Move before consensus forms.

Once everyone agrees open models are “good enough,” the easy arbitrage is gone. The money is in acting while the narrative still sounds slightly unbelievable.

PrismML’s chart illustrating “intelligence density” for Bonsai 8B

Holo3 visual from H Company’s official launch page.

The punchline

The sexy version of this story is that a tiny rebel lab embarrassed the giants. The strategically useful version is better: the cost of owning serious AI capability is falling across the stack. And when infrastructure costs fall, founders who move early get to look clairvoyant later.

So yes, be excited. Be fired up. But be precise. The verified story is already strong enough without exaggeration.

🔮 The Bottom Line

If you are still thinking about AI as “which premium API should I plug in?”, you are playing the old game.

The new game is deciding what intelligence you should own, what intelligence you should rent, and how fast you can turn that mix into a wedge nobody else in your market saw coming.
