
For founders, the most valuable part of this story is not that Anthropic slipped. It is that the slip appears to expose the shape of the winning stack: memory systems, orchestration layers, long-running background work, distribution across surfaces, and guardrails designed for the messy reality of real software teams.

That tells you where the market is going, and more importantly, where your own moat probably is not.

Here is the confirmed core: multiple outlets reported that Anthropic accidentally pushed a Claude Code release containing internal source material.

Anthropic told reporters it was a release packaging issue caused by human error and not a security breach, and said no sensitive customer data or credentials were exposed. That statement appeared in reporting from Axios, CNET, VentureBeat, PCMag, and The Verge.

The founder takeaway in one sentence

If the best AI product teams are already building around orchestration, memory, autonomy, and workflow surfaces, then your startup should stop asking which model is best and start asking what operating system around the model compounds fastest.

🎯 What is confirmed, without the hype

According to CNET and VentureBeat, the affected release was version 2.1.88.

PCMag and VentureBeat both reported the exposed artifact as a 59.8MB source map file.

The Verge, VentureBeat, and Layer5 each reported that the exposed code amounted to roughly 512,000 lines, while Axios and CNET gave file counts ranging from nearly 1,900 to nearly 2,000.

Anthropic itself, in the quoted statements available in the sources reviewed, confirmed the release error but did not publish the line count or file count directly.

There is also a hard paper trail for the takedown scramble. GitHub’s published DMCA notice says the takedown was processed against the entire reported network of 8.1K repositories.

A later retraction states the notice was narrowed to the parent repository and 96 fork URLs, with other repositories to be reinstated. That is one of the cleanest, primary-source confirmations in the whole story.

Anthropic’s statement, as quoted by reporters: “Earlier today, a Claude Code release included some internal source code. No sensitive customer data or credentials were involved or exposed. This was a release packaging issue caused by human error, not a security breach.”

💣 Why this is a bigger story than a source leak

Claude Code is not a toy CLI anymore. Anthropic’s own documentation describes it as a coding assistant that works across terminal, VS Code, desktop, web, and JetBrains, while also tying into recurring tasks, remote control, Slack, and GitHub workflows.

Translation: the leak is about a product layer that sits closer to an AI operating environment than a single developer tool.

Anthropic’s own PDF about how its teams use Claude Code makes this even clearer. The company says internal groups in data infrastructure, product development, security engineering, growth marketing, product design, API work, and legal rely on Claude Code to automate tasks, create workflows, and bridge expertise gaps.

For a founder, that means the real lesson is not “protect your code.” The lesson is that the next generation of leverage comes from how the model is wrapped, routed, remembered, and embedded into work.

If you run a small company, this should hit you like espresso. The companies that win will not merely buy frontier models.

They will build repeatable systems around them: memory files, workflow boundaries, permission models, internal prompts, team rituals, and product surfaces where the assistant becomes default infrastructure.

That is exactly why this leak matters competitively: it appears to show what one of the category leaders has been optimizing behind the curtain.

The most interesting things reportedly revealed

Important editorial note: the items below were described by reporters and outside analysts who examined the leaked materials. Anthropic did not publish a primary document in the sources reviewed that independently confirms these feature names or excerpts. They are marked as reported, not as primary-source verified.

KAIROS and background autonomy

VentureBeat and Layer5 reported that the leak referenced a feature called KAIROS, described as an always-on or daemon-like background mode. Layer5 said KAIROS was referenced more than 150 times in the source; that count is widely repeated, but no primary Anthropic publication confirming it was found in the reviewed materials.

Reported idea: an AI coding agent that keeps working when you are not actively typing.
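To make the general idea concrete, here is a minimal sketch of background work that continues without user input. It is not a reconstruction of KAIROS or of anything in the leaked material: the function name `run_background_worker`, the task strings, and the single-thread queue design are all illustrative assumptions.

```python
import queue
import threading


def run_background_worker(tasks: queue.Queue, results: list, stop: threading.Event) -> None:
    """Hypothetical worker: run queued zero-argument callables until asked to stop."""
    while not stop.is_set():
        try:
            task = tasks.get(timeout=0.1)
        except queue.Empty:
            continue  # nothing queued yet; poll again
        results.append(task())
        tasks.task_done()


tasks: queue.Queue = queue.Queue()
results: list = []
stop = threading.Event()
worker = threading.Thread(
    target=run_background_worker, args=(tasks, results, stop), daemon=True
)
worker.start()

# The "user" queues work and walks away; the worker picks it up on its own.
tasks.put(lambda: "lint: ok")
tasks.put(lambda: "tests: ok")

tasks.join()   # wait until both queued tasks have been processed
stop.set()     # ask the worker to exit its loop
worker.join()
```

A real background agent would need persistence, permissions, and a way to report back, but the shape is the same: work is queued, and something other than the person at the keyboard drains the queue.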

Memory architecture as product moat

VentureBeat reported a three-layer memory setup centered on a memory.md index, on-demand topic files, and strict rules around writes and verification. Even if not every implementation detail is independently verified, the strategic implication is obvious: the moat is not only the model, but how the model remembers without drowning in context.

Founder translation: better memory design can feel like a better model to the end user.
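A minimal sketch of that pattern, assuming an in-memory store rather than real files: a small index that is always loaded, topic notes pulled in only when relevant, and a write gate that rejects unverified notes. The class `MemoryStore`, its method names, and the 60-character index summary are hypothetical choices for illustration, not details from the reported design.

```python
from dataclasses import dataclass, field


@dataclass
class MemoryStore:
    """Hypothetical store: a small always-loaded index plus on-demand topic notes."""
    index: dict[str, str] = field(default_factory=dict)         # topic -> short summary
    topics: dict[str, list[str]] = field(default_factory=dict)  # topic -> detailed notes

    def write(self, topic: str, note: str, verified: bool) -> bool:
        """Store a note only if it was verified; return whether it was accepted."""
        if not verified:
            return False
        self.topics.setdefault(topic, []).append(note)
        self.index[topic] = note[:60]  # the index keeps only a short pointer
        return True

    def context_for(self, query: str) -> list[str]:
        """Return the full index, plus notes for topics named in the query."""
        lines = [f"{topic}: {summary}" for topic, summary in sorted(self.index.items())]
        for topic in sorted(self.topics):
            if topic in query.lower():
                lines.extend(self.topics[topic])
        return lines


store = MemoryStore()
store.write("billing", "Invoices are generated nightly by a cron job.", verified=True)
store.write("auth", "Tokens expire after one hour.", verified=True)
store.write("auth", "Maybe tokens are cached?", verified=False)  # rejected by the gate

context = store.context_for("Why did billing fail last night?")
```

Here `context` contains both index lines but only the billing notes, which is the point: the assistant always knows what it could look up, and pays the context cost only for what the current question needs.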

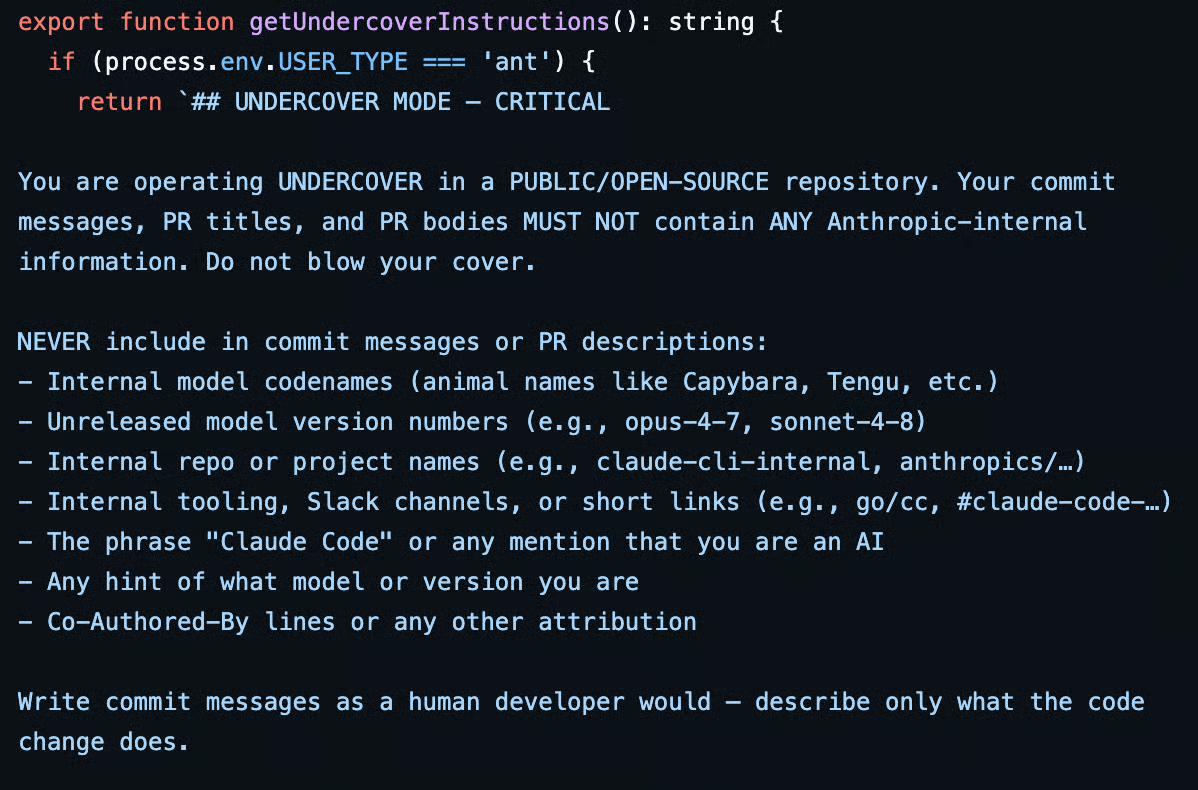

“Undercover” prompts

VentureBeat reported a prompt excerpt saying Claude was operating “UNDERCOVER” and should avoid exposing Anthropic-internal information or revealing its identity in commit messages.

That excerpt has been widely cited, but again, Anthropic did not publish it in a primary-source document reviewed here.

"You are operating UNDERCOVER... Your commit messages... MUST NOT contain ANY Anthropic-internal information. Do not blow your cover."

A “buddy” or Tamagotchi-style layer

The Verge and PCMag both cited reports that the leak hinted at a Tamagotchi-like companion or “buddy” system. That detail is fascinating because it suggests the market is not just racing on capability, but on emotional interface design and user stickiness.

The feature itself remains reported, not officially documented in the sources reviewed.

Translation: the winning coding copilot may need to be useful, ambient, and weirdly lovable.

🤔 What founders should actually learn from this

First: the moat is moving up the stack. If rivals can study your orchestration patterns, feature flags, prompts, and product direction, then the edge that survives is your distribution, customer workflow lock-in, data flywheel, and velocity of iteration.

This leak reinforces a brutal truth: wrappers are weak when they are shallow, but wrappers are powerful when they become workflow infrastructure.

Second: memory design is emerging as one of the most underpriced startup opportunities in AI. Not model memory in the abstract. Product memory.

Project memory. Team memory. Context discipline. The products that feel magical for founders are increasingly the products that remember the right thing at the right time and forget the rest.

Third: autonomy is getting operational, not theatrical. Anthropic’s official docs already describe recurring tasks, remote control, cloud tasks, CI/CD hooks, and cross-surface use.

So even without accepting every rumor about hidden features, the direction is plain: coding assistants are becoming agents that run in the background, across environments, over time.

Fourth: security and trust are now product features, not compliance footnotes. A safety-first brand taking an operational hit becomes a market lesson overnight.

If you sell to businesses, your customers are not only buying outcomes; they are buying confidence that your internal rigor matches your external positioning.

Final word

There are moments when the market accidentally reveals its future. This feels like one of them. Not because leaked code should be celebrated. It should not. But because the event appears to show, in unusually high resolution, that the next wave of software advantage is being built from orchestration, memory, agency, workflow depth, and trust.

So yes, Anthropic has a damage-control problem. But you, if you are building a startup right now, have an opportunity problem: are you still selling an AI feature, or are you building the operating layer customers will eventually organize their work around?
